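We have covered both the Scrapy framework and Selenium in earlier posts; this time we look at how to use the two together. If you find it useful, don't forget to share and like!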

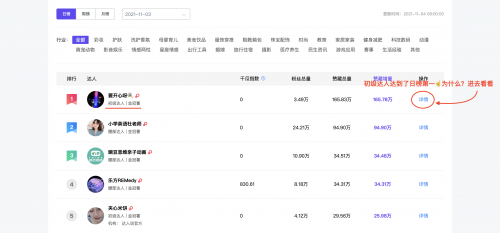

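This time we will fetch data from Qiangua (千瓜): http://www.qian-gua.com/rank/category/

Sorry! The page you run into next is a frustrating one!

How do we get more of the daily-ranking influencer data? With Selenium! To get more of it, we combine Selenium with Scrapy for this crawl.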

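Requirements:

Python 3 environment

Scrapy framework

Selenium (see https://selenium-python-zh.readthedocs.io/en/latest/)

Google Chrome + ChromeDriver

ChromeDriver download page (pick the version that matches your installed Chrome): https://chromedriver.storage.googleapis.com/index.html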

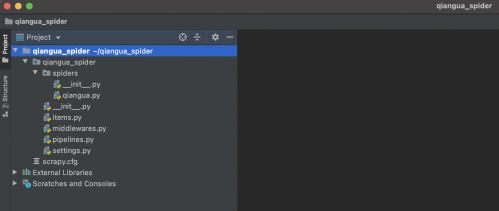

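First, create the project: scrapy startproject qiangua_spider

Then change into the qiangua_spider directory and run: scrapy genspider qiangua qian-gua.com

Open the new project in PyCharm; the directory structure is as follows:

In settings.py, set ROBOTSTXT_OBEY to False.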

Write items.py as follows:

    import scrapy


    class QianguaSpiderItem(scrapy.Item):
        # define the fields for your item here like:
        name = scrapy.Field()         # blogger name
        level = scrapy.Field()        # blogger level
        fans = scrapy.Field()         # follower count
        likeCollect = scrapy.Field()  # combined likes and collects

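Next, write the spider file (genspider created it as spiders/qiangua.py). Without logging in, we cannot see more of the Xiaohongshu influencers' account rankings, follower growth and similar information; to see more, we have to log in.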

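Flow and approach:

1. Open http://www.qian-gua.com/rank/category/

2. Click the login link in the top-right corner (this requires a Qiangua account).

3. There are two ways to log in: scanning a WeChat QR code, or logging in with a phone number (this example uses the phone login).

4. Get the cookies of the logged-in session.

5. Save the cookies and request http://api.qian-gua.com/Rank/GetBloggerRank?pageSize=50&pageIndex={page}&dateCode=20211104&period=1&originRankType=2&rankType=2&tagId=0&_={timestamp}

6. Receive the JSON data and parse it.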

Steps 1-4 of this flow are all carried out with Selenium.
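
The browser-independent part of step 5 can be sketched on its own: reducing Selenium's list of cookie dicts to the name/value mapping that Scrapy accepts, and building the ranking URL with a millisecond timestamp. This is only a minimal sketch. The helper names `cookies_to_dict` and `build_rank_url`, the `now` parameter and the sample cookie are assumptions made for illustration; the query parameters are the ones from the URL in the flow.

```python
import time
from urllib.parse import urlencode

API_URL = "http://api.qian-gua.com/Rank/GetBloggerRank"


def cookies_to_dict(selenium_cookies):
    """Keep only name and value from each Selenium cookie dict (hypothetical helper)."""
    return {c["name"]: c["value"] for c in selenium_cookies}


def build_rank_url(page, date_code="20211104", now=None):
    """Build the ranking URL for one page; `_` is a 13-digit millisecond timestamp."""
    now = time.time() if now is None else now
    params = {
        "pageSize": 50,
        "pageIndex": page,
        "dateCode": date_code,
        "period": 1,
        "originRankType": 2,
        "rankType": 2,
        "tagId": 0,
        "_": int(now * 1000),
    }
    return f"{API_URL}?{urlencode(params)}"


# Invented sample cookie, shaped like one entry of driver.get_cookies()
sample = [{"name": "sid", "value": "abc", "domain": ".qian-gua.com"}]
print(cookies_to_dict(sample))
print(build_rank_url(1, now=1636000000.0))
```

Passing `now` explicitly is only there to make the function easy to check; in a spider it would be left at its default so each request gets the current time.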

The code is as follows:

    import json
    import time

    import scrapy
    from selenium import webdriver
    from selenium.webdriver.common.by import By

    from qiangua_spider.items import QianguaSpiderItem


    class QianguaSpider(scrapy.Spider):
        name = 'qiangua'
        # Use the bare domain so requests to api.qian-gua.com are not filtered as offsite
        allowed_domains = ['qian-gua.com']
        # start_urls = ['http://www.qian-gua.com/rank/category/']
        headers = {
            'Origin': 'http://app.qian-gua.com',
            'Host': 'api.qian-gua.com',
            'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/14.1.2 Safari/605.1.15'
        }

        def start_requests(self):
            # Steps 1-4: log in through a real browser and collect the session cookies
            driver = webdriver.Chrome()
            url = 'http://www.qian-gua.com/rank/category/'
            driver.get(url)
            driver.implicitly_wait(5)
            # find_element(By.XPATH, ...) replaces find_element_by_xpath, which Selenium 4 removed
            driver.find_element(By.XPATH, '//div[@class="loggin"]/a').click()
            time.sleep(2)
            # Switch to the phone-number login tab
            driver.find_element(By.XPATH, '//div[@class="login-tab"]/span[2]').click()
            # Replace the two placeholders with your own Qiangua account
            driver.find_element(By.XPATH, '//input[@class="js-tel"]').send_keys('YOUR_PHONE_NUMBER')
            driver.find_element(By.XPATH, '//input[@class="js-pwd"]').send_keys('YOUR_PASSWORD')
            driver.find_element(By.XPATH, '//button[@class="btn-primary js-login-tel-pwd"]').click()
            time.sleep(2)
            cookies = driver.get_cookies()
            driver.quit()  # end the browser session and the ChromeDriver process

            # Write the cookies to a file as JSON
            with open('qianguaCookies.json', 'w', encoding='utf-8') as f:
                json.dump(cookies, f)
            print(cookies)

            # Read the cookies back
            with open('qianguaCookies.json', 'r', encoding='utf-8') as f:
                listcookies = json.load(f)

            cookies_dict = dict()
            for cookie in listcookies:
                # Only name and value are needed; domain and the other keys can be dropped
                cookies_dict[cookie['name']] = cookie['value']

            # More data requires a paid membership; here we fetch the top 30
            for page in range(1, 2):
                timestamp = str(int(time.time() * 1000))  # 13-digit millisecond timestamp
                data_url = "http://api.qian-gua.com/Rank/GetBloggerRank?pageSize=50&pageIndex=" + str(
                    page) + "&dateCode=20211104&period=1&originRankType=2&rankType=2&tagId=0&_=" + timestamp
                yield scrapy.Request(url=data_url, cookies=cookies_dict, callback=self.parse, headers=self.headers)

        def parse(self, response):
            # Step 6: the API returns JSON; each entry of ItemList is one blogger
            rs = json.loads(response.text)
            if rs.get('Msg') == 'ok':
                blogger_list = rs.get('Data').get("ItemList")
                for blogger in blogger_list:
                    name = blogger.get('BloggerName')
                    level = blogger.get('LevelName', '无')
                    fans = blogger.get('Fans')
                    likeCollect = blogger.get('LikeCollectCount')
                    item = QianguaSpiderItem()
                    item['name'] = name
                    item['level'] = level
                    item['fans'] = fans
                    item['likeCollect'] = likeCollect
                    yield item
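
The parsing step can also be tried without a browser or a network request. The sketch below pulls the same four fields out of a response dict and returns an empty list when the response is not usable. The function name `extract_bloggers` and the sample payload are invented for illustration; only the key names (Msg, Data, ItemList, BloggerName, LevelName, Fans, LikeCollectCount) come from the spider code.

```python
import json


def extract_bloggers(payload):
    """Return one dict per blogger, or [] if the response is not usable (hypothetical helper)."""
    if payload.get("Msg") != "ok":
        return []
    # Guard against a missing or null Data / ItemList instead of raising
    items = (payload.get("Data") or {}).get("ItemList") or []
    return [
        {
            "name": b.get("BloggerName"),
            "level": b.get("LevelName", "无"),
            "fans": b.get("Fans"),
            "likeCollect": b.get("LikeCollectCount"),
        }
        for b in items
    ]


# Invented sample, shaped like the fields the spider reads; not real Qiangua data
sample_text = json.dumps({
    "Msg": "ok",
    "Data": {"ItemList": [
        {"BloggerName": "demo", "Fans": 1000, "LikeCollectCount": 2500},
    ]},
})
rows = extract_bloggers(json.loads(sample_text))
print(rows)
```

Keeping the extraction in a plain function like this makes it possible to check the field mapping against a saved response before running the whole crawl.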

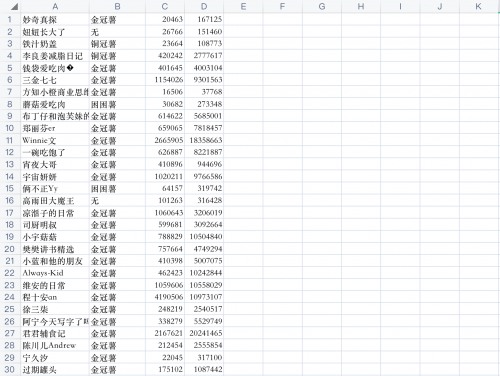

Finally, we add pipelines.py to save the parsed data; here we write it to a CSV file.

The code is as follows:

    import csv

    from itemadapter import ItemAdapter


    class QianguaSpiderPipeline:
        def __init__(self):
            # newline='' stops the csv module from writing blank lines on Windows
            self.stream = open('blogger.csv', 'w', newline='', encoding='utf-8')
            self.f = csv.writer(self.stream)

        def open_spider(self, spider):
            print("Spider started...")

        def process_item(self, item, spider):
            adapter = ItemAdapter(item)
            data = [adapter.get('name'), adapter.get('level'), adapter.get('fans'), adapter.get('likeCollect')]
            self.f.writerow(data)
            # Return the item so that any later pipeline still receives it
            return item

        def close_spider(self, spider):
            self.stream.close()
            print('Spider finished!')

Be sure to uncomment the pipelines section in settings.py:

    ITEM_PIPELINES = {
        'qiangua_spider.pipelines.QianguaSpiderPipeline': 300,
    }
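
As an aside, Scrapy's built-in feed exports can produce a similar CSV without a custom pipeline. The fragment below is a sketch of that alternative, not what this project uses; it assumes Scrapy 2.1 or later (where the FEEDS setting is available) and reuses the field names from items.py.

```python
# settings.py fragment: an alternative to the custom CSV pipeline (assumes Scrapy >= 2.1)
FEEDS = {
    "blogger.csv": {
        "format": "csv",
        "encoding": "utf8",
        # Column order; these are the field names defined in items.py
        "fields": ["name", "level", "fans", "likeCollect"],
    },
}
```

Unlike the pipeline in this post, the CSV feed exporter writes a header row by default, so the two outputs are not byte-for-byte identical.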

Run the spider:

    scrapy crawl qiangua

The results are very satisfying.

Beijing Public Network Security Registration No. 11010802030320 (京公网安备 11010802030320号)
